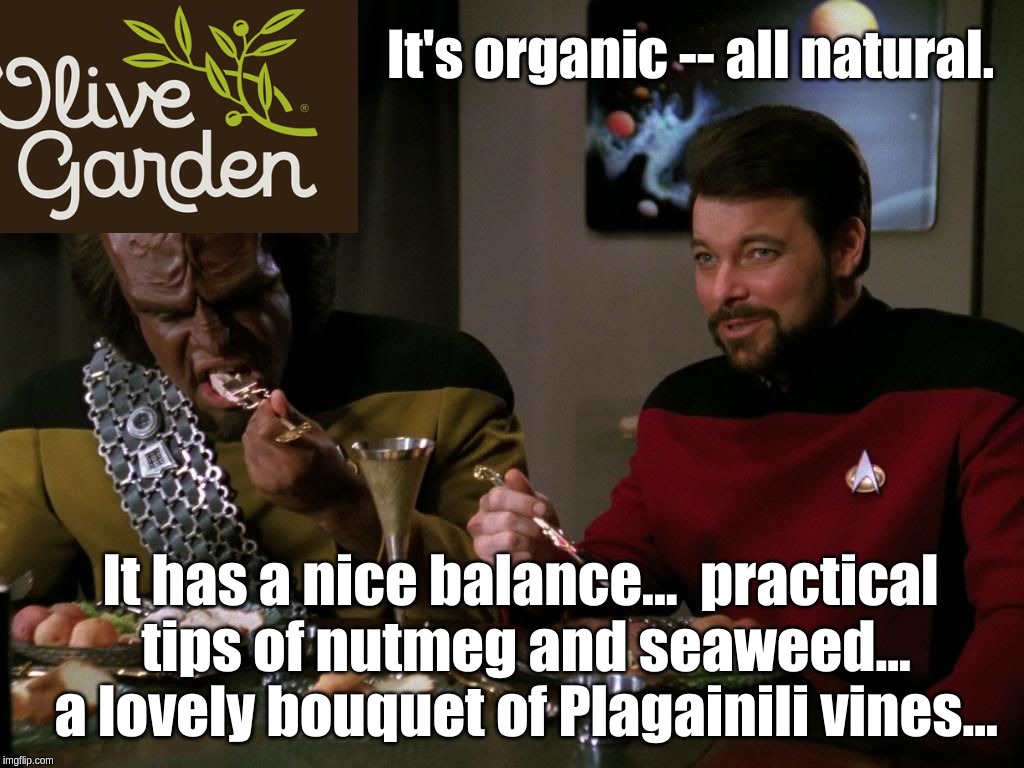

In my last post I trained OpenAI’s neural network on the Star Trek: The Next Generation and Deep Space Nine scripts and had it write some of its own.

I used a version of GPT-2 that lets you feed it additional training material. This is why the output looks like a TV script. But even without fine-tuning, GPT-2 knows a whole lot about Star Trek, because it was trained on a huge sample of the internet, and the internet knows a lot about Star Trek. So what happens if you ask regular GPT-2 to predict the next word in the sequence "This episode of Star Trek: The Next Generation opens with a scene where"?

This episode of Star Trek: The Next Generation opens with a scene where The Enterprise's fuel cells are destroyed, and the crew and crew of the Enterprise are not rescued. As they are being evacuated, Picard uses the transporter device to activate the emergency access and communication system, and a rescue crew arrives to rescue the crew.

The transporter device is activated, and the crew is saved. Later on, in a flashback scene, Picard describes how the Enterprise was going to be destroyed: "We had to determine what we could do with the fuel and the crew and the power that we had."

The Enterprise is finally able to reach the planet, and the crew and crew escape. While the power is still available through the emergency access, the power is being used to launch an attack on the Klingon capital.
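If you want to try this kind of prompting yourself, here is a minimal sketch. It assumes the Hugging Face `transformers` library and its public `gpt2` checkpoint, which is not necessarily the setup that produced the sample above. The function names and the sampling settings (`temperature`, `top_k`, `max_new_tokens`) are my own illustrative choices, so expect different (and differently weird) episodes each run.

```python
# A sketch of prompting un-fine-tuned GPT-2, assuming the Hugging Face
# `transformers` package is installed (pip install transformers torch).
# The helper names and sampling values below are illustrative assumptions,
# not the settings used for the sample in this post.

PROMPT = "This episode of Star Trek: The Next Generation opens with a scene where"


def sampling_settings(max_new_tokens=150, temperature=0.8, top_k=40):
    """Collect the sampling options passed to the generator (assumed values)."""
    return {
        "max_new_tokens": max_new_tokens,  # how much text to add after the prompt
        "do_sample": True,                 # sample instead of always taking the top word
        "temperature": temperature,        # lower = safer, higher = stranger
        "top_k": top_k,                    # only consider the k most likely next tokens
    }


def generate_episode(prompt=PROMPT, seed=0, **overrides):
    """Ask stock GPT-2 to continue the prompt and return prompt + continuation."""
    # Imported here so the rest of the file can be read without the library installed.
    from transformers import pipeline, set_seed

    set_seed(seed)  # makes a given run repeatable
    generator = pipeline("text-generation", model="gpt2")
    settings = sampling_settings(**overrides)
    result = generator(prompt, **settings)
    return result[0]["generated_text"]
```

Calling `print(generate_episode())` downloads the model on first use and prints the prompt followed by GPT-2's continuation. Changing `seed` or passing something like `temperature=1.0` gives you a different episode.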
